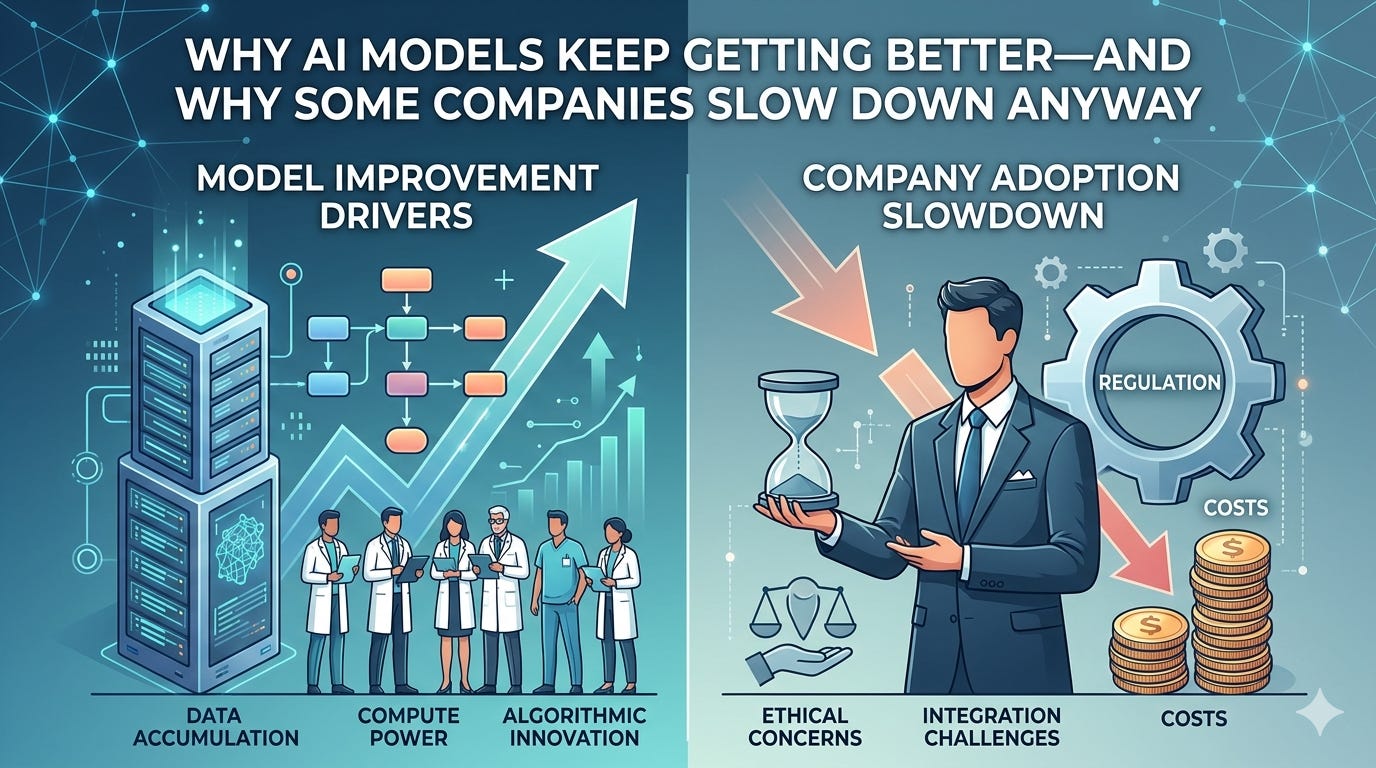

Why models keep getting better—and why some companies slow down anyway

Every few months, your favorite AI feels sharper: fewer blank stares, better follow‑ups, more useful code and summaries. That sense of acceleration isn’t an illusion. It’s the product of two flywheels spinning faster together: scale and feedback.

What’s pushing quality up

More compute, smarter compute. Training runs keep getting larger, but also more efficient: better parallelization and sparse mixture‑of‑experts (MoE) layers let models learn more from each unit of compute, while optimized inference stacks make the resulting models cheaper to serve.

Better data, not just more data. Curated high‑quality corpora, reinforcement learning from human and AI feedback, synthetic data to fill gaps, and rigorous deduplication all reduce noise and sharpen reasoning.

Tools and memory. Retrieval, code execution, browsing, and external tools let models offload facts and math, so they can spend their “intelligence budget” on reasoning. Long‑context windows and session memory smooth multi‑step work.

Architecture and training refinements. From improved tokenization and instruction tuning to preference optimization and safety‑aware training, small engineering choices add up to big step‑ups in reliability.

Tighter evaluation loops. Continuous red‑teaming, domain benchmarks, and user telemetry expose failure modes faster, feeding the next training cycle.
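The "sparsity/MoE layers" point is the most mechanical item on that list, and a toy version shows where the savings come from. In a mixture‑of‑experts layer, a cheap gate scores every expert, but only the top‑k experts actually run for a given token, so total parameters can grow without a proportional rise in per‑token compute. What follows is a minimal pure‑Python sketch under stated assumptions: a linear gate, toy experts, and a softmax over only the selected scores (one common variant). The names `moe_forward`, `gate_weights`, and `k` are illustrative, not any framework's API. Real MoE layers batch tokens, add load‑balancing losses, and run on accelerators.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(token, experts, gate_weights, k=2):
    """Route one token vector through the top-k of `experts` (toy sketch).

    token:        list of floats (the token's hidden vector)
    experts:      list of callables, each mapping a vector to a vector
    gate_weights: one weight row per expert (a linear gate is assumed here)
    Returns (output_vector, indices_of_experts_used).
    """
    # 1. The gate scores every expert with a cheap dot product.
    scores = [sum(w * x for w, x in zip(row, token)) for row in gate_weights]
    # 2. Keep only the k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # 3. Turn the chosen scores into mixing weights that sum to 1.
    mix = softmax([scores[i] for i in chosen])
    # 4. Only the chosen experts run; the others cost nothing for this token.
    out = [0.0] * len(token)
    for weight, i in zip(mix, chosen):
        y = experts[i](token)
        out = [o + weight * v for o, v in zip(out, y)]
    return out, chosen


if __name__ == "__main__":
    calls = []

    def make_expert(idx):
        # Hypothetical toy expert: scales its input by (idx + 1) and logs that it ran.
        def expert(v):
            calls.append(idx)
            return [(idx + 1) * x for x in v]
        return expert

    experts = [make_expert(i) for i in range(4)]
    gate = [[0.1, 0.0], [0.9, 0.0], [0.5, 0.0], [0.2, 0.0]]
    out, used = moe_forward([1.0, 0.0], experts, gate, k=2)
    print("experts used:", used, "| experts actually called:", calls)
    print("output:", out)
```

With four experts and `k=2`, only half of the expert parameters are touched for this token; scaling to dozens of experts with the same `k` is what lets total model size outpace per-token cost.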

So why the hesitation to release the next big thing? When capabilities climb, the blast radius of mistakes grows. That shifts the job from “make it smarter” to “prove it’s safe, predictable, and useful at scale.” Companies sometimes delay or stage releases for a mix of reasons:

Safety thresholds. As models get better at code, persuasion, or specialized domains, dual‑use risks rise. Teams run capability and misuse evaluations (e.g., cybersecurity, biological information hazards, autonomy tests) and won’t ship until mitigations such as guardrails, monitoring, and rate limits demonstrably work.

Responsible scaling policies. Anthropic, for example, has published a Responsible Scaling Policy that ties specific capability thresholds to required safety and security measures, so a model that crosses a threshold is not deployed until the matching safeguards are in place. If a next model (sometimes rumored under names like “Mythos”) exists, a slower rollout can reflect those commitments rather than simple caution.

Unpredictability at the frontier. New behaviors can emerge late in training. Extra red‑teaming, system cards, and staged access (research preview, enterprise first, then wider release) reduce surprises.

Product readiness, not just benchmarks. It’s one thing to ace evals; it’s another to deliver consistent latency, low hallucination rates, tool reliability, and clear failure modes in real products.

Cost and reliability. New models can be expensive to serve. Teams optimize inference, memory, batching, and availability so quality gains don’t come with unusable costs or downtime.

Legal and reputational risk. Stronger models amplify the stakes of copyright, privacy, and policy violations. Delays buy time for audits, policy tuning, and partner coordination.

Market strategy. Sequenced launches let companies educate users, update pricing, and protect developer ecosystems without breaking apps overnight.
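Staged access and sequenced launches are, at the software level, a rollout problem. One widely used technique in software generally (not necessarily how any AI lab implements it) is deterministic percentage bucketing: hash each user into a stable bucket, then widen eligibility stage by stage. The sketch below assumes hypothetical stage names, account tiers, and a salt string; none of them come from a real product.

```python
import hashlib

# Hypothetical rollout plan: stage -> (eligible account tiers, % of those admitted).
# Stage and tier names are illustrative assumptions, not any vendor's real tiers.
ROLLOUT_STAGES = {
    "research_preview": ({"research"}, 100),
    "enterprise_first": ({"research", "enterprise"}, 100),
    "wider_release": ({"research", "enterprise", "pro", "free"}, 25),
}


def rollout_bucket(user_id: str, salt: str = "next-model") -> int:
    """Map a user to a stable bucket in [0, 100).

    Hashing (salt, user_id) means the same user always lands in the same
    bucket, so raising the percentage only ever adds users. The salt is a
    hypothetical per-launch value; changing it reshuffles all buckets.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) % 100


def has_access(user_id: str, tier: str, stage: str) -> bool:
    """True if this user may use the new model at the given rollout stage."""
    tiers, percent = ROLLOUT_STAGES[stage]
    return tier in tiers and rollout_bucket(user_id) < percent


if __name__ == "__main__":
    # Count how many simulated free-tier users get in at each stage.
    for stage in ROLLOUT_STAGES:
        admitted = sum(has_access(f"user-{n}", "free", stage) for n in range(10_000))
        print(f"{stage}: {admitted} of 10000 free-tier users admitted")
```

Keeping buckets stable across stages is the key design choice: a user admitted at 25% stays admitted at 50%, so access never flaps while the release widens.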

Put simply: the same forces that make models rapidly better also make the consequences of errors more serious. The responsible response isn’t panic; it’s pacing—prove the guardrails, stress‑test the edges, and widen access in steps.

The likely near future

Expect two tempos at once. Under the hood, fast iteration will continue: smarter tool use, longer context, better multimodal reasoning, and cheaper inference. Publicly, you’ll see more staged rollouts, detailed safety reports, and opt‑in previews. That tension (speed inside, prudence outside) is a sign the field is maturing, not stalling.