Web Security Then vs. AI Security Now: The End of Being Intentional

Security then vs. now: the end of the intentional click

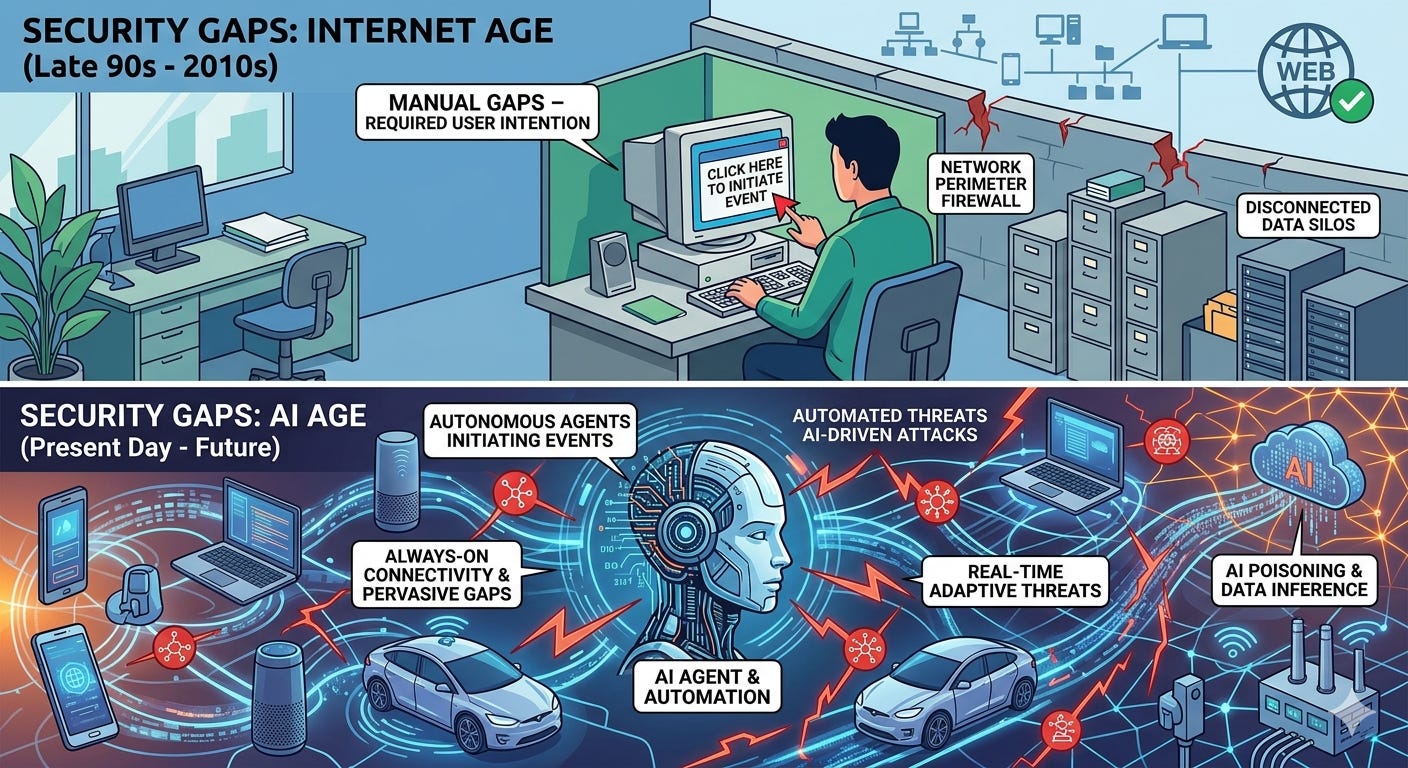

In the early Internet age, most harm required your cooperation. You had to click a bad link, open a sketchy attachment, type a password into a fake page. Security lived on the web’s perimeter: firewalls, filters, antivirus, MFA, and training people not to click. Friction was a feature. Your intent was a last line of defense.

In the AI age, intent is automated. Agents read your files, summarize meetings, book travel, update the CRM, trigger workflows—often while you sleep. We’re giving software not just our knowledge but our keys: identity, tools, and the power to act. The “perimeter” is now everywhere your models can see and everything your agents can do.

What changed

The attack surface moved from pages to context: Attackers don’t need you to click; they need your model to read. A poisoned wiki page, PDF, or website can carry hidden instructions (“prompt injection”) that steer your assistant to leak data or take unsafe actions.

Tools turned models into actors: Calendars, email, file drives, payment rails, ticketing systems. One over‑permissive scope or unclear rule can turn a helpful agent into an expensive incident.

Identity became ambient: Always-on connectors, long‑lived API tokens, and background automations mean a stolen key or hijacked session can do a day’s worth of work in minutes—quietly.

Data gravity increased: RAG pipelines pull from entire drives and data lakes. Without access controls on retrieval, an unrelated chat can surface sensitive docs, and models fine-tuned on that data can memorize what they should only reference.

Supply chains got longer: Third‑party models, plugins, embeddings, and hosted inference are dependencies you don’t fully control. Compromise upstream flows downstream.

Deception got industrialized: Deepfakes and LLM‑crafted lures make social engineering cheap, targeted, and believable. Your CFO’s “voice” is no longer proof.

Security, redefined for AI

Security is no longer just “Who can see what?” It’s:

Who (or what) can act?

On which data?

Through which tools?

Under what guardrails?

With what auditability?

A practical new playbook

Minimize and segment data: Connect the least data necessary. Create “firebreak” indexes (by team, sensitivity). Block high‑risk sources from RAG by default.
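To make the "firebreak" idea concrete, here is a minimal sketch of a retrieval filter that runs before any document reaches the model. Everything in it is an assumption for illustration: the sensitivity labels, the `BLOCKED_SOURCES` names, and the `Doc` fields are hypothetical, not part of any particular RAG framework.

```python
from dataclasses import dataclass

# Hypothetical sensitivity labels, ordered from least to most sensitive.
SENSITIVITY_ORDER = ["public", "internal", "confidential", "restricted"]

# Hypothetical high-risk sources, blocked unless explicitly allowed.
BLOCKED_SOURCES = {"hr_records", "legal_hold"}


@dataclass(frozen=True)
class Doc:
    doc_id: str
    team: str
    sensitivity: str
    source: str


def retrievable(doc, user_team, max_sensitivity, allowed_sources=frozenset()):
    """Return True only if the doc sits inside the caller's firebreak."""
    # High-risk sources are denied by default.
    if doc.source in BLOCKED_SOURCES and doc.source not in allowed_sources:
        return False
    # Segment by team: no cross-team retrieval in this sketch.
    if doc.team != user_team:
        return False
    # Cap by sensitivity level.
    return (SENSITIVITY_ORDER.index(doc.sensitivity)
            <= SENSITIVITY_ORDER.index(max_sensitivity))


def filter_candidates(docs, user_team, max_sensitivity):
    """Drop every candidate the caller should not see before ranking."""
    return [d for d in docs if retrievable(d, user_team, max_sensitivity)]
```

The point of filtering before ranking is that a document the model never receives cannot be leaked by a clever prompt.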

Least privilege for agents and tools: Granular, time‑boxed scopes; per‑task tokens; default‑deny on write actions (payments, deletes, external shares).
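One way this could look in practice is a small authorization check that every tool call passes through. This is a sketch under stated assumptions: the action names, the `Grant` shape, and the read-only allowlist are all hypothetical, and a real system would back this with its identity provider rather than an in-memory list.

```python
import time
from dataclasses import dataclass

# Hypothetical read-only actions that are safe to allow without a grant.
READ_ONLY_ACTIONS = {"calendar.read", "files.read"}


@dataclass(frozen=True)
class Grant:
    action: str        # e.g. "payments.send" (hypothetical name)
    task_id: str       # the grant is valid for one task only
    expires_at: float  # Unix timestamp; the grant is time-boxed


def is_allowed(action, task_id, grants, now=None):
    """Default-deny: anything that is not read-only needs a live, per-task grant."""
    now = time.time() if now is None else now
    if action in READ_ONLY_ACTIONS:
        return True
    return any(
        g.action == action and g.task_id == task_id and now < g.expires_at
        for g in grants
    )
```

Because unknown actions fall through to the grant check, a newly added tool is denied until someone explicitly grants it.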

Human-in-the-loop for irreversible steps: Require explicit approval for money movement, bulk data exports, permission changes, or outbound messages to new recipients.
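A rough sketch of such an approval gate follows. The action names, the `approver` callback, and the `known_recipients` set are hypothetical stand-ins; in a real deployment the approver would be a ticket, a chat prompt, or a signed confirmation rather than a Python function.

```python
from typing import Callable

# Hypothetical names for actions that cannot be undone.
IRREVERSIBLE = {"move_money", "change_permissions", "export_data"}


class ApprovalRequired(Exception):
    """Raised when a gated action was not approved by a human."""


def run_action(
    action: str,
    params: dict,
    execute: Callable[[str, dict], str],
    approver: Callable[[str, dict], bool],
    known_recipients: frozenset = frozenset(),
) -> str:
    """Execute an action, pausing for approval when it is irreversible."""
    # Messages to recipients we have never contacted are gated too.
    new_recipient = (
        action == "send_message" and params.get("to") not in known_recipients
    )
    if (action in IRREVERSIBLE or new_recipient) and not approver(action, params):
        raise ApprovalRequired(f"{action} was not approved")
    return execute(action, params)
```

The agent never calls `execute` directly, so there is no code path around the gate.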

Validate before you trust: Verify content provenance (signed docs, trusted repos). Treat untrusted inputs as hostile; sanitize and constrain what models can read or execute.
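As an illustration, a provenance check can be as simple as an allowlist of hosts, with tool access switched off whenever untrusted content is in the context. The host names below are placeholders, and an allowlist alone does not prove a document is benign; it only narrows where instructions can come from.

```python
from urllib.parse import urlsplit

# Placeholder allowlist of hosts whose content we treat as trusted.
TRUSTED_HOSTS = {"docs.example.com", "git.example.com"}


def is_trusted_source(url: str) -> bool:
    """Trust only HTTPS URLs whose exact hostname is on the allowlist."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in TRUSTED_HOSTS


def tools_enabled(source_urls) -> bool:
    """Allow tool calls only when every retrieved source is trusted."""
    return all(is_trusted_source(u) for u in source_urls)
```

Matching on the parsed hostname, rather than on a substring of the URL, avoids look-alikes such as `docs.example.com.evil.test`.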

Prompt and policy as code: Version, test, and red‑team prompts and system rules. Add allow/deny lists for tools, destinations, and data classes.

Guardrail the outputs: DLP on model responses, egress filtering, PII/secret detection, and rate limits. Use pattern checks for toxic or harmful instructions.
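A minimal sketch of output-side detection, using regular expressions on the model's response before it leaves your boundary. The patterns are rough heuristics chosen for illustration (a US SSN shape, a simple email shape, and the commonly cited `AKIA` prefix for AWS access key IDs); a production DLP system would use a much richer detector set.

```python
import re

# Heuristic patterns for illustration only; expect false positives and misses.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str):
    """Replace each match with a placeholder and report which detectors fired."""
    findings = []
    for name, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{name}]", text)
        if count:
            findings.append(name)
    return text, findings
```

Returning the list of findings alongside the redacted text lets the caller decide whether to block the response, alert, or just log.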

Short-lived identity: Rotate keys, use OAuth with PKCE and narrow scopes, bind credentials to sessions, and grant access just‑in‑time.
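For readers who have not met PKCE, the client-side half is small. The sketch below generates a code verifier and its S256 challenge as described in RFC 7636; the function names are my own, and the surrounding OAuth flow (authorization request, token exchange) is left out.

```python
import base64
import hashlib
import secrets


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as PKCE requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def challenge_for(verifier: str) -> str:
    """Derive the S256 code challenge from a code verifier."""
    return _b64url(hashlib.sha256(verifier.encode("ascii")).digest())


def make_pkce_pair():
    """Return (code_verifier, code_challenge) for a single authorization request."""
    verifier = _b64url(secrets.token_bytes(32))  # 43 characters
    return verifier, challenge_for(verifier)
```

The verifier stays with the client and is sent only at token exchange, so an intercepted authorization code is useless on its own.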

Full-fidelity audit: Log prompts, context retrieved, tool calls, approvals, and results. Make incidents replayable and explainable.
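Here is one possible shape for such a log: an append-only list where each record carries the hash of the previous one. The hash chain is my addition for tamper evidence, not something the playbook above requires, and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous record's hash together with this event's canonical JSON."""
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append a prompt, retrieval, tool call, approval, or result to the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {
        "event": event,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, event),
    }
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev = GENESIS
    for record in log:
        if record["prev_hash"] != prev:
            return False
        if record["hash"] != _digest(prev, record["event"]):
            return False
        prev = record["hash"]
    return True
```

Replaying an incident then means walking the records in order, with `verify_chain` telling you whether the history can be trusted.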

Secure the AI supply chain: Pin model versions, verify hashes, isolate third‑party plugins, and monitor for drift or compromise.
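Hash verification is the easiest of these to automate. A minimal sketch, assuming you keep a pinned SHA-256 digest for each model artifact somewhere you trust (the function names and the idea of a pinned-digest store are assumptions here):

```python
import hashlib
import hmac
from pathlib import Path


def sha256_file(path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model weights need not fit in memory."""
    h = hashlib.sha256()
    with Path(path).open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_artifact(path, pinned_sha256: str) -> bool:
    """Return True only if the file's digest matches the pinned value."""
    return hmac.compare_digest(sha256_file(path), pinned_sha256.lower())
```

Run the check at load time, not just at download time, so a file swapped on disk is caught before it serves traffic.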

Train for the new phish: Teach teams about prompt injection, deepfakes, and agent misuse—not just email links.

The bottom line

Yesterday, your click was the gate. Today, your agents are. Build for blast‑radius reduction, not perfection. Limit what models can see, narrow what agents can do, keep humans in control of irreversible actions, and log everything. In the AI age, security isn’t a perimeter—it’s choreography.