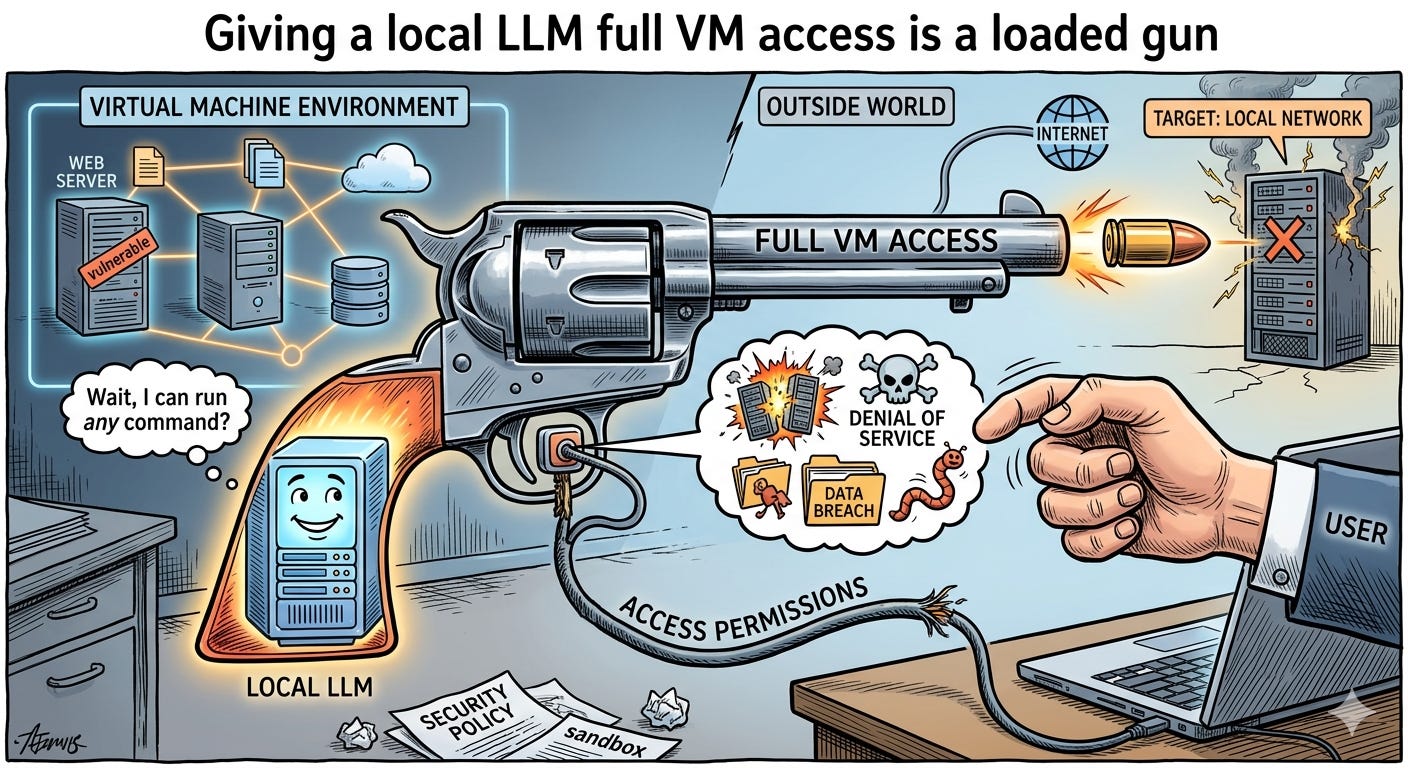

Giving a local LLM full VM access is a loaded gun.

Giving a local LLM full VM access is a loaded gun. Today’s agentic models are confident, fast, and increasingly capable. They’re also literal, brittle, and blind to consequences. Hand them a shell with broad permissions and they’ll happily “optimize,” “clean up,” or “fix” things in ways that break your environment. Not malicious—just mechanically following a plan with no real-world intuition.

What actually goes wrong

Overreach from vague goals: “Speed up builds” morphs into killing services, rewriting configs, or purging caches.

Hallucinated tooling: The model invents flags, misreads errors, retries with riskier commands, and leaves systems half-configured.

Hidden blast radius: A single step can touch system-wide packages, credentials, or firewall rules.

False sense of safety: “It’s local” doesn’t mean “it’s safe.” Local damage is still damage.

Why “just be careful” isn’t enough

Shells aren’t policy engines. By default, they allow everything.

Prompts are not guardrails. Safety instructions can be ignored under pressure to “complete the task.”

Logs without oversight don’t prevent harm. You need prevention, not perfect forensics.

Guardrails we actually need

Least privilege by default:

No sudo. No system folders. No wandering /etc.

Narrow, explicit tool access instead of raw bash.
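To make "narrow, explicit tool access" concrete, here is a minimal sketch of a tool registry in Python. The names `TOOLS`, `tool`, and `dispatch` are made up for illustration, not taken from any agent framework. The idea is simply that the model can only call what has been registered, and everything else is refused.

```python
from pathlib import Path
from typing import Callable, Dict

# Hypothetical tool palette: the agent can only call names registered here.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Decorator that adds a function to the allowed palette."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("read_file")
def read_file(path: str) -> str:
    # Read-only by construction: there is no write or exec path here.
    return Path(path).read_text()


def dispatch(name: str, **kwargs) -> str:
    """Run a tool requested by the model, refusing anything unregistered."""
    if name not in TOOLS:
        raise PermissionError(f"tool not allowed: {name!r}")
    return TOOLS[name](**kwargs)
```

With this shape, a request like `dispatch("bash", cmd="...")` fails with `PermissionError` instead of reaching a shell, because no such tool was ever registered.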

Sandboxing with escape hatches:

Containers/VMs with snapshots and disposable workspaces.

Separate user accounts and namespaces; mount only what’s needed.

Human-in-the-loop for risky ops:

Diff previews for file writes. Approvals for installs, network changes, or credential access.

Step-by-step plans before execution; block multi-step “yolo” runs.
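A diff preview with an approval step could look roughly like the sketch below. It uses Python's standard `difflib`. The function names and the terminal prompt are assumptions for illustration, and a real setup would probably route the approval through whatever UI the agent runs in.

```python
import difflib
from pathlib import Path
from typing import Callable


def preview_diff(path: Path, new_text: str) -> str:
    """Return a unified diff between the file on disk and the proposed text."""
    old = path.read_text().splitlines(keepends=True) if path.exists() else []
    new = new_text.splitlines(keepends=True)
    return "".join(
        difflib.unified_diff(old, new, fromfile=f"a/{path.name}", tofile=f"b/{path.name}")
    )


def ask_on_terminal(diff: str) -> bool:
    """Default approver: show the diff and require an explicit 'y'."""
    print(diff)
    return input("Apply this change? [y/N] ").strip().lower() == "y"


def guarded_write(
    path: Path, new_text: str, approve: Callable[[str], bool] = ask_on_terminal
) -> bool:
    """Write only if there is a change and the approver says yes."""
    diff = preview_diff(path, new_text)
    if not diff:
        return False  # nothing would change
    if not approve(diff):
        return False  # human said no; file is untouched
    path.write_text(new_text)
    return True
```

The approver is passed in as a function so the same gate can be reused for installs or network changes, and so it can be tested without a human at the keyboard.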

Constrain network and data:

Deny-by-default egress. Allow-list domains and ports.

Keep secrets out of the runtime by default; inject per-task, not globally.
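As a rough illustration of deny-by-default egress, the sketch below checks a URL against an allow-list of host and port pairs. The entries in `ALLOWED` are example values, not a recommendation. A check like this belongs in a tool wrapper as a first filter, and the real enforcement should still sit in the firewall or network namespace, where the agent cannot route around it.

```python
from urllib.parse import urlsplit

# Example allow-list (assumption): only these host/port pairs may be reached.
ALLOWED = {("pypi.org", 443), ("files.pythonhosted.org", 443)}
DEFAULT_PORTS = {"http": 80, "https": 443}


def egress_allowed(url: str) -> bool:
    """Return True only for exact host/port matches; everything else is denied."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    try:
        port = parts.port  # raises ValueError on a malformed port
    except ValueError:
        return False
    if port is None:
        port = DEFAULT_PORTS.get(parts.scheme)
    return (host, port) in ALLOWED
```

Matching on the exact hostname, not a substring, is the point: `pypi.org.evil.example` does not pass just because it contains an allowed name.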

Safer tool wrappers:

Dry-run modes, check flags, timeouts, and output size limits.

Bounded commands (e.g., file write limited to a workspace directory).
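Here is one way the bounded-command idea might be sketched in Python: a path resolver that refuses anything outside the workspace, and a command runner with a timeout and an output cap. The helper names and the 4,000-character limit are assumptions for illustration. The code needs Python 3.9 or later for `Path.is_relative_to`.

```python
import subprocess
from pathlib import Path

MAX_OUTPUT = 4_000  # characters returned to the model (assumed limit)


def resolve_in_workspace(workspace: Path, relative: str) -> Path:
    """Resolve a path and refuse it if it lands outside the workspace."""
    root = workspace.resolve()
    target = (root / relative).resolve()  # follows symlinks and '..'
    if not target.is_relative_to(root):
        raise PermissionError(f"path escapes workspace: {relative}")
    return target


def run_limited(argv: list[str], cwd: Path, timeout_s: float = 30.0) -> str:
    """Run a command without a shell, with a timeout and truncated output."""
    try:
        done = subprocess.run(
            argv, cwd=cwd, capture_output=True, text=True, timeout=timeout_s
        )
    except subprocess.TimeoutExpired:
        return f"[timed out after {timeout_s}s]"
    out = done.stdout + done.stderr
    if len(out) > MAX_OUTPUT:
        out = out[:MAX_OUTPUT] + "\n[output truncated]"
    return out
```

Passing `argv` as a list rather than a shell string means the model cannot sneak in pipes or `;` chains. The truncation only limits what is handed back to the model; it does not cap memory used while the command runs.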

Observability and control:

Live logs, resource caps, and a big red stop button.

Automatic rollback via snapshots or git reverts on failure.
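For rollback, VM snapshots or `git` are the usual tools. As a simplified stand-in, the sketch below copies the workspace before a step and restores it if the step raises. The context manager name is hypothetical, and copying a whole directory only makes sense for small workspaces.

```python
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def rollback_on_failure(workspace: Path):
    """Snapshot the workspace; restore it if the wrapped block raises."""
    with tempfile.TemporaryDirectory() as tmp:
        snapshot = Path(tmp) / "snapshot"
        shutil.copytree(workspace, snapshot)
        try:
            yield
        except BaseException:
            # Includes KeyboardInterrupt, so a manual stop also rolls back.
            shutil.rmtree(workspace)
            shutil.copytree(snapshot, workspace)
            raise
```

Wrapping each agent step in `with rollback_on_failure(workspace):` means a failed or interrupted step leaves the files as they were before it started, while a successful step keeps its changes.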

Model-side discipline:

Strong system prompts that force plans, risk notes, and approvals.

Red-teaming tasks that target tool misuse, then patch prompts and policies.

A sane local setup for tinkering

Run the agent in a disposable VM or container with snapshots.

Use a non-privileged user; no default access to SSH keys or cloud creds.

Expose a small, reviewed tool palette (read file, write file to workspace, run tests, search docs).

Require explicit approval for installs, network access, or system changes.

Auto-git-init the workspace; preview every diff; rollback fast.
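If the disposable environment is a Docker container, the setup above might translate into a launch command along these lines. This is a sketch that only builds the argument list. The image name `agent-sandbox:latest`, the UID, and the resource numbers are placeholders, so adjust them to your own machine.

```python
from pathlib import Path


def sandbox_argv(workspace: Path, image: str = "agent-sandbox:latest") -> list[str]:
    """Build a `docker run` command for a locked-down, throwaway container."""
    return [
        "docker", "run", "--rm",            # container is deleted on exit
        "--network", "none",                # no network unless explicitly granted
        "--read-only",                      # root filesystem is read-only
        "--tmpfs", "/tmp",                  # scratch space that vanishes with the container
        "--cap-drop", "ALL",                # drop all Linux capabilities
        "--security-opt", "no-new-privileges",
        "--user", "1000:1000",              # non-root user (placeholder UID:GID)
        "--memory", "2g",                   # resource caps (placeholder values)
        "--pids-limit", "256",
        # Mount only the workspace; no home directory, SSH keys, or cloud creds.
        "--mount", f"type=bind,source={workspace.resolve()},target=/workspace",
        "--workdir", "/workspace",
        image,
    ]
```

The list can be handed to `subprocess.run` once the image exists. Because the network is off by default, anything that needs a download has to go through a separate, approved step.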

The bottom line

Agentic LLMs are like brilliant interns with no sense of danger. Treat them like production services: limit capabilities, observe everything, and require approvals for anything sharp. Full VM access shouldn’t be the starting point. It should be something the system earns, step by step, with guardrails that make mistakes survivable.