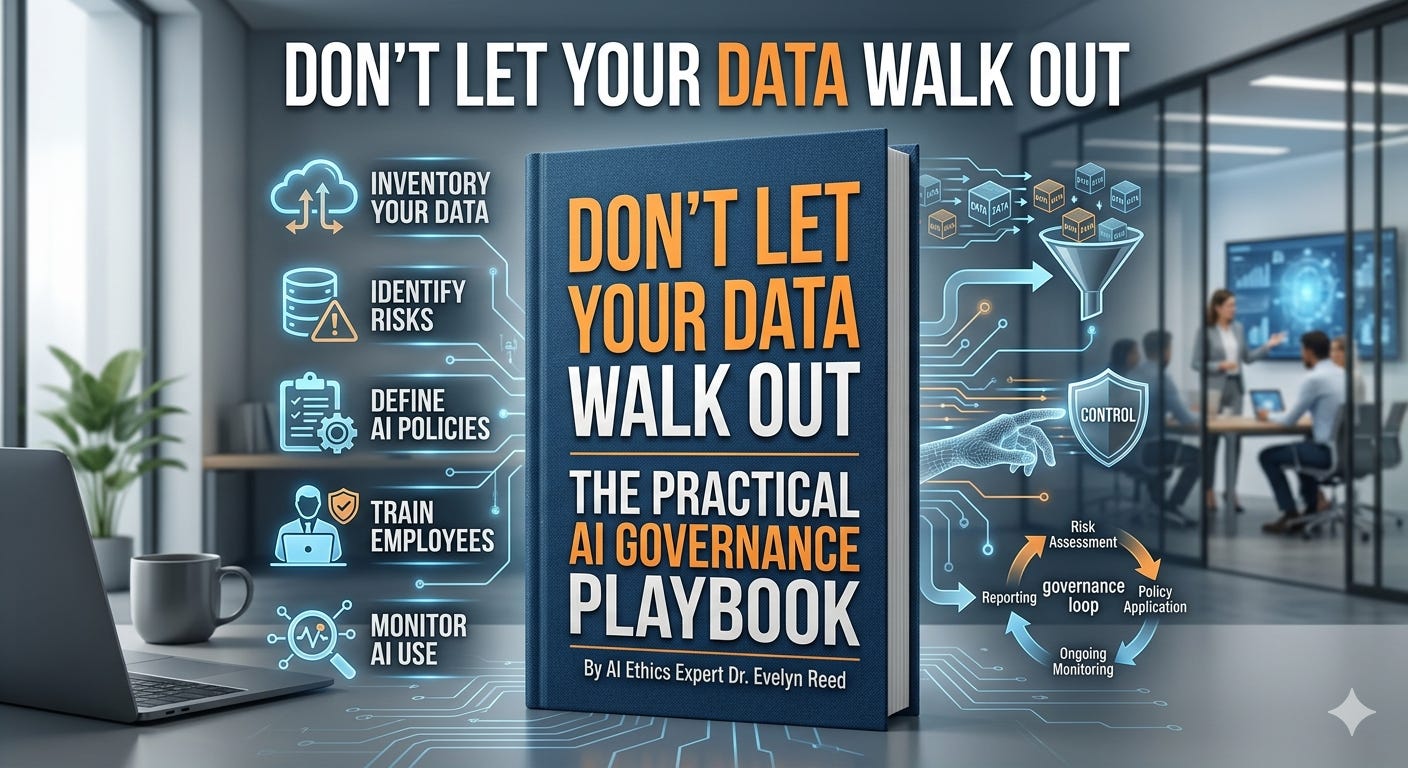

Don’t let your data walk out with the prompt: a practical AI governance playbook

The pace is breathtaking. One day your team is piloting Claude Code from Anthropic.

The next, engineers are pasting stack traces into OpenAI Codex and autosaving outputs to half a dozen internal tools.

Amazing productivity—until confidential roadmaps, customer PII, or M&A decks slip into prompts and “competitive intelligence” strolls out the door.

Here’s a concise, real-world approach to keep speed without leaks.

Start with policy that’s usable in the flow of work

Classify the crown jewels: Define what is Restricted (e.g., PII, credentials, unreleased financials, source code, M&A, regulated data), Internal, and Public. Map examples employees see daily.

Acceptable use (plain-English): Do not paste customer PII, credentials, security keys, unreleased product or financials, or legal/HR matters into any AI tool. Only use approved models, through approved gateways. Turn off training/retention where possible.

Model/data boundaries: Default to enterprise endpoints with retention disabled and no model training on your data. Prefer private networking and customer-managed encryption keys.

Access and approvals: Require SSO/MFA, least-privilege roles, and an allowlist of approved models/tools. Sensitive datasets need data steward approval.

Logging and accountability: Log prompts, responses, attachments, and model/version. Create auditable trails for who accessed what, when, and why.

Third-party risk: Vendor assessment must cover data flows, retention, subprocessors, residency, breach response, and IP terms.
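
To make the classification and acceptable-use rules above checkable by tooling, they can be written down as data. The following is a minimal sketch, not a definitive implementation: the category names, the `may_enter_prompt` helper, and the choice to treat unknown categories as Restricted are all assumptions made for illustration.

```python
from enum import Enum


class Classification(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


# Hypothetical category names; the Restricted entries mirror the examples
# in the classification rule above, the other two are placeholders.
CATEGORY_CLASSIFICATION = {
    "customer_pii": Classification.RESTRICTED,
    "credentials": Classification.RESTRICTED,
    "unreleased_financials": Classification.RESTRICTED,
    "source_code": Classification.RESTRICTED,
    "m_and_a": Classification.RESTRICTED,
    "regulated_data": Classification.RESTRICTED,
    "team_wiki_page": Classification.INTERNAL,
    "published_blog_post": Classification.PUBLIC,
}


def classify(category: str) -> Classification:
    """Look up a category; unknown categories fail closed as RESTRICTED."""
    return CATEGORY_CLASSIFICATION.get(category, Classification.RESTRICTED)


def may_enter_prompt(category: str, steward_approved: bool = False) -> bool:
    """Assumed rule: Restricted data is blocked unless a data steward approved it."""
    if classify(category) is Classification.RESTRICTED:
        return steward_approved
    return True
```

Failing closed on unknown categories is a design choice: it keeps a new, unlabeled data type out of prompts until someone classifies it.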

Enforce with technical guardrails

Enterprise AI platforms with privacy controls:

OpenAI ChatGPT Enterprise and API with data controls

Anthropic Claude for Work (Team and Enterprise plans) and API with no-training options

Azure OpenAI with private networking and key management (http://azure.microsoft.com/products/ai-services/openai-service)

Amazon Bedrock with VPC and KMS (http://aws.amazon.com/bedrock)

Google Vertex AI with CMEK and Private Service Connect (http://cloud.google.com/vertex-ai)

LLM gateways and policy enforcement:

Cloudflare AI Gateway for routing, usage caps, and observability

Azure API Management as a central LLM proxy with auth and quotas (http://azure.microsoft.com/products/api-management)

NVIDIA NeMo Guardrails for prompt/response policy and safety filters (http://developer.nvidia.com/nemo-guardrails)

Lakera for prompt injection and sensitive-data detection
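
Whichever gateway product you choose, the core decision it makes is small: is this user authenticated, is this model on the allowlist, and is this role allowed to use it? The sketch below illustrates that decision in isolation. It does not use any vendor's API; the model identifiers, role names, `GatewayRequest` type, and `authorize` function are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical allowlist: model identifiers and role names are placeholders.
APPROVED_MODELS = {"approved-chat-model", "approved-code-model"}
ROLE_MODELS = {
    "engineer": {"approved-chat-model", "approved-code-model"},
    "analyst": {"approved-chat-model"},
}


@dataclass(frozen=True)
class GatewayRequest:
    user: str
    role: str
    model: str
    sso_verified: bool  # assumed to be set by your identity provider integration


def authorize(req: GatewayRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Checks run from cheapest to most specific."""
    if not req.sso_verified:
        return False, "sso_required"
    if req.model not in APPROVED_MODELS:
        return False, "model_not_allowlisted"
    # Least privilege: a role with no entry gets no models at all.
    if req.model not in ROLE_MODELS.get(req.role, set()):
        return False, "role_not_permitted"
    return True, "ok"
```

Returning a reason code alongside the decision gives the logging layer something concrete to record when a request is refused.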

Data discovery and DLP (prevent sensitive data in prompts and outputs):

Microsoft Purview (http://www.microsoft.com/security/business/information-protection/purview)

Google Cloud Sensitive Data Protection, formerly Cloud DLP (http://cloud.google.com/dlp)

Amazon Macie (http://aws.amazon.com/macie)

Nightfall AI for SaaS DLP
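
To show what "prevent sensitive data in prompts" means mechanically, here is a deliberately simple, regex-based redaction pass. It is a sketch under stated assumptions, not a substitute for the products above: the three patterns (email address, US SSN-shaped number, AWS access key ID) and the `redact` helper are illustrative only, and real DLP engines rely on much richer detection such as validation, context, and trained classifiers.

```python
import re

# Illustrative patterns only; they will miss many real cases and can
# produce false positives.
PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace each match with a [LABEL] placeholder and report which labels fired."""
    findings = []
    for label, pattern in PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            findings.append(label)
    return text, findings


clean, found = redact("Contact jane.doe@example.com, key AKIAABCDEFGHIJKLMNOP")
print(clean)  # Contact [EMAIL], key [AWS_ACCESS_KEY_ID]
print(found)  # ['EMAIL', 'AWS_ACCESS_KEY_ID']
```

Whether a finding should redact the text, block the request, or only raise an alert is a policy decision; the sketch redacts so the user can keep working.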

Data governance and access control:

Collibra, BigID, Immuta, OneTrust for catalogs, policies, and approvals

Okta for SSO/MFA and SailPoint for identity governance

Network and egress control (stop uploads to unapproved AI sites):

Netskope and Zscaler CASB/SWG

Secrets and keys:

HashiCorp Vault to prevent keys in prompts and code (http://www.hashicorp.com/products/vault)

Monitoring and forensics:

Splunk and Datadog for logs/alerts; Varonis for data access analytics
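
Log platforms are easiest to query when every AI request arrives as one structured record. The sketch below builds such a record with the fields the logging policy calls for (prompt, response, attachments, model/version, plus who and why) and serializes it as a single JSON line. The field names and the `audit_record` and `to_log_line` helpers are assumptions for illustration, not any vendor's schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user, purpose, model, model_version, prompt, response, attachments=()):
    """Build one audit entry: who, when, why, which model, and what was exchanged."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "purpose": purpose,
        "model": model,
        "model_version": model_version,
        "prompt": prompt,
        "response": response,
        "attachments": list(attachments),
        # A hash lets you correlate identical prompts without reading the text.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }


def to_log_line(record: dict) -> str:
    """Serialize as a single JSON line, a format most log pipelines can ingest."""
    return json.dumps(record, sort_keys=True)
```

Because these records contain full prompts and responses, the log store itself holds sensitive data and needs the same access controls as the sources it describes.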

Make it real with an operating model

Create an AI Review Board: security, legal, privacy, data, and product. They own the allowlist, review new tools, and adjudicate edge cases fast.

Provide an approved toolbox: a single chat/workbench that routes through your gateway, with built-in DLP and model choices. Include GitHub Copilot (http://github.com/features/copilot) or Claude Code via approved integrations.

Train and test: short mandatory training on what not to paste; quarterly red-team exercises to probe prompt injection and data exfiltration.

Measure and iterate: track adoption, blocked exfil attempts, and incidents. Adjust policies and allowlist based on evidence, not fear.
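
The metrics in the last step can be computed directly from gateway events. This is a minimal sketch assuming each event is a dictionary with hypothetical `user` and `outcome` keys, where `outcome` is either `"allowed"` or `"blocked"`; your gateway's actual event format will differ.

```python
from collections import Counter


def summarize(events):
    """Roll gateway events up into adoption and blocking metrics."""
    counts = Counter(e["outcome"] for e in events)
    total = sum(counts.values())
    blocked = counts.get("blocked", 0)
    return {
        "total_requests": total,
        "active_users": len({e["user"] for e in events}),
        "blocked": blocked,
        # Guard against division by zero when there is no traffic yet.
        "block_rate": blocked / total if total else 0.0,
    }


events = [
    {"user": "alice", "outcome": "allowed"},
    {"user": "alice", "outcome": "blocked"},
    {"user": "bob", "outcome": "allowed"},
    {"user": "bob", "outcome": "allowed"},
]
print(summarize(events))
# {'total_requests': 4, 'active_users': 2, 'blocked': 1, 'block_rate': 0.25}
```

A rising block rate alongside flat adoption may mean the rules are too strict or the training is not landing, which is the kind of evidence the review board can act on.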

Copy/paste starter policy language

“Use only company-approved AI tools via the AI Gateway. Do not input customer PII, credentials, secrets, unreleased financials, source code, legal or HR data. AI outputs are drafts and must be reviewed before use. All usage is logged and may be audited. Violations may result in access removal.”

The goal isn’t to slow people down—it’s to make the safe path the fastest path. With clear rules, a controlled entry point, and the right guardrails, you can scale AI across the org without letting your competitive edge leak out with the next prompt.