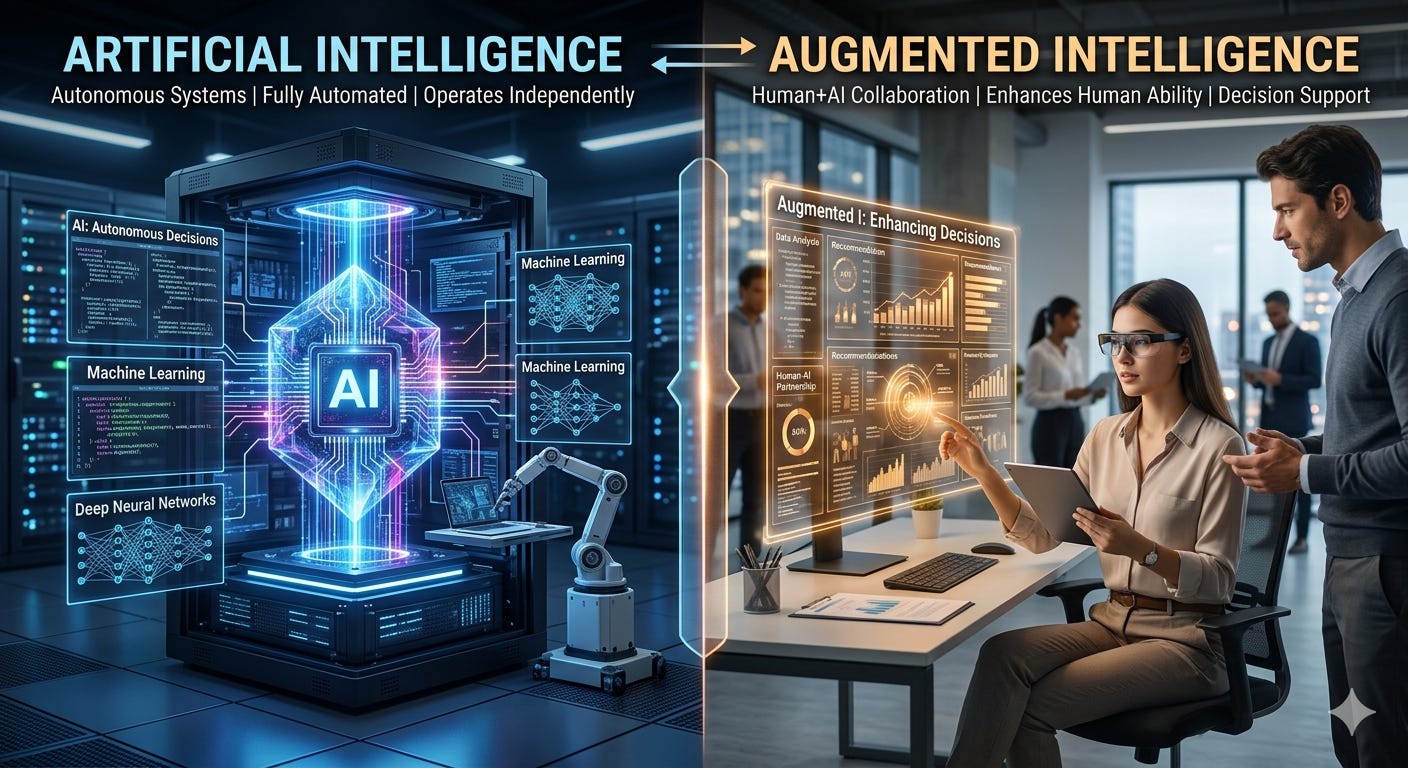

ArI vs AuI - Artificial vs Augmented Intelligence

AI Is Augmented Intelligence: Context Is the Hidden Ingredient

Ask a model to “write a contract” and it will produce a contract. Ask it to write your company’s vendor agreement for a healthcare pilot in Texas with a 90‑day termination clause and HIPAA BAAs, and you’ll get something you can actually use. The difference isn’t magic—it’s context.

Artificial intelligence works best as augmented intelligence: a system that amplifies human judgment with patterns learned from data. Without rich context—either provided during the interaction or baked into the model via training—AI defaults to generic, plausible answers. To move from “demo wow” to dependable work, you need to engineer context on purpose.

What “context” really means

Task specifics: who, what, where, when, why, and for whom. Goals, audience, tone, success criteria, constraints.

Domain knowledge: internal policies, product catalogs, ontologies, glossaries, regulatory rules.

History and preferences: prior decisions, style guides, user choices, recurring exceptions.

Tools and environment: calendars, CRMs, code repos, APIs, calculators—anything the model can call to verify or act.

Fresh signals: current inventory, prices, weather, incidents—facts that change and cannot be memorized.

How to feed context to AI

During the interaction

Structure the ask. Provide inputs as fields, not a wall of prose. Give examples of good output and edge cases to avoid.

Ground with retrieval. Use retrieval‑augmented generation (RAG) to attach the most relevant pages, tickets, or code snippets to the prompt.

Use tool calling. Let the model fetch real prices, perform calculations, or check policy via APIs rather than inventing answers.

Add lightweight memory. Store user preferences and prior outputs with consent, and surface them when relevant.
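
As a rough illustration of the first two practices above (structuring the ask and grounding it with retrieval), here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Ask` fields, the `TASK`/`SOURCE` labels, and especially the word-overlap scoring, which stands in for the embedding or search index a real RAG system would use.

```python
from dataclasses import dataclass, field


@dataclass
class Ask:
    """Structured task fields instead of a wall of prose (hypothetical schema)."""
    task: str
    audience: str
    constraints: list[str] = field(default_factory=list)


def score(query: str, doc: str) -> int:
    """Naive relevance: number of distinct query words that also appear in the doc."""
    query_words = set(query.lower().split())
    doc_words = set(doc.lower().split())
    return len(query_words & doc_words)


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k best-scoring docs, dropping docs with no overlap."""
    ranked = sorted(docs, key=lambda doc_id: score(query, docs[doc_id]), reverse=True)
    return [doc_id for doc_id in ranked[:k] if score(query, docs[doc_id]) > 0]


def build_prompt(ask: Ask, docs: dict[str, str], k: int = 2) -> str:
    """Assemble labeled fields plus retrieved sources into one prompt string."""
    lines = [f"TASK: {ask.task}", f"AUDIENCE: {ask.audience}"]
    lines += [f"CONSTRAINT: {c}" for c in ask.constraints]
    for doc_id in retrieve(ask.task, docs, k):
        # Keeping the doc id next to the text lets the answer cite its source.
        lines.append(f"SOURCE [{doc_id}]: {docs[doc_id]}")
    return "\n".join(lines)
```

Calling `build_prompt(Ask("answer a battery warranty question", "customer"), docs)` would attach only the documents that share words with the task. The labeled fields are what matter here; the scoring function is the part most likely to be swapped out.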

Before the interaction

Curate and label data. High‑signal documents with clear metadata beat a warehouse of noise.

Fine‑tune selectively. When style, format, or domain nuance matters, small targeted fine‑tunes can lock in consistency.

Build a shared schema. Controlled vocabularies and IDs prevent drift (is it “customer,” “client,” or “account”?).
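
One way to picture a shared schema is a controlled vocabulary that maps every synonym to a single canonical term before data reaches the model. The sketch below is hypothetical: the `CANONICAL` mapping, the `entity_type` field name, and the choice of "customer" as the canonical term are all assumptions for illustration.

```python
# Hypothetical controlled vocabulary: every synonym maps to one canonical term.
CANONICAL = {
    "customer": "customer",
    "client": "customer",
    "account": "customer",
}


def normalize_term(term: str) -> str:
    """Map a free-text label to its canonical term; raise if it is unknown."""
    key = term.strip().lower()
    if key not in CANONICAL:
        # Failing loudly surfaces new synonyms instead of letting them drift in.
        raise KeyError(f"term not in controlled vocabulary: {term!r}")
    return CANONICAL[key]


def normalize_record(record: dict[str, str]) -> dict[str, str]:
    """Return a copy of the record with 'entity_type' rewritten to the canonical term."""
    cleaned = dict(record)
    cleaned["entity_type"] = normalize_term(record["entity_type"])
    return cleaned
```

Rejecting unknown terms, rather than passing them through, is a design choice: it forces someone to decide where a new label belongs.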

After the interaction

Close the loop. Capture human edits, reasons for rejection, and outcomes as training signals.

Evaluate continuously. Track accuracy, helpfulness, latency, and hallucination rates across realistic test sets.
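
Continuous evaluation can start as simply as aggregating a few per-case flags over a test set. The following is a minimal sketch under stated assumptions: the `Result` fields are hypothetical, and it assumes something upstream (a human reviewer or an automated check) has already judged each case as correct and grounded. Helpfulness is left out because it usually needs a rating scale rather than a flag.

```python
from dataclasses import dataclass


@dataclass
class Result:
    """One evaluated test case (hypothetical fields)."""
    correct: bool       # answer matched the reference
    grounded: bool      # every claim was supported by a provided source
    latency_ms: float   # end-to-end response time


def summarize(results: list[Result]) -> dict[str, float]:
    """Aggregate accuracy, hallucination rate, and mean latency over a test set."""
    if not results:
        raise ValueError("cannot summarize an empty test set")
    n = len(results)
    return {
        "accuracy": sum(r.correct for r in results) / n,
        # Here an ungrounded answer counts as a hallucination (an assumption).
        "hallucination_rate": sum(not r.grounded for r in results) / n,
        "mean_latency_ms": sum(r.latency_ms for r in results) / n,
    }
```

Running `summarize` on the same test set after every prompt, data, or model change turns these numbers into a regression check.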

Where this pays off

Customer support: With policy pages, warranty rules, and the customer’s history retrieved into the prompt, responses become precise enough that routine cases can be automated safely.

Clinical documentation: When models see problem lists, meds, and vitals, notes become more accurate and concise; clinicians stay in the loop to validate.

Coding copilots: With repo context, issue threads, and architecture docs, suggestions align with your patterns instead of Stack Overflow averages.

Sales and ops: Proposals, forecasts, and playbooks improve when grounded in current pricing, inventory, and territory constraints.

Guardrails that matter

Provenance: Show sources and link back. Let users verify.

Privacy by design: Minimize the data you collect, encrypt it, and obtain consent. Keep sensitive data out of model training unless its use is explicitly permitted.

Limits of the window: Context windows are finite; summarize, chunk, and index smartly to avoid drowning the model.
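
To make the chunking idea concrete, here is a small sketch that splits text into overlapping chunks and keeps only as many as fit a budget. It counts whitespace-separated words as a stand-in for tokens, which is an assumption: real models count tokens with their own tokenizer. The function names and parameters are hypothetical.

```python
def chunk_words(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into chunks of at most `size` words, overlapping by `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def fit_budget(chunks: list[str], budget: int) -> list[str]:
    """Keep chunks in order until adding the next one would exceed the word budget."""
    kept, used = [], 0
    for chunk in chunks:
        cost = len(chunk.split())
        if used + cost > budget:
            break
        kept.append(chunk)
        used += cost
    return kept
```

In practice the chunks would be ranked by relevance before `fit_budget` is applied, so that the budget is spent on the most useful passages first.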

Bias and coverage: Audit which perspectives your data amplifies—and which it misses.

The takeaway

AI doesn’t replace expertise; it scales it. Your prompt is a spec. Your data is fuel. Your tools are the hands. And your judgment is the safety system. Treat context as a first‑class product, and “artificial” intelligence becomes authentically useful.