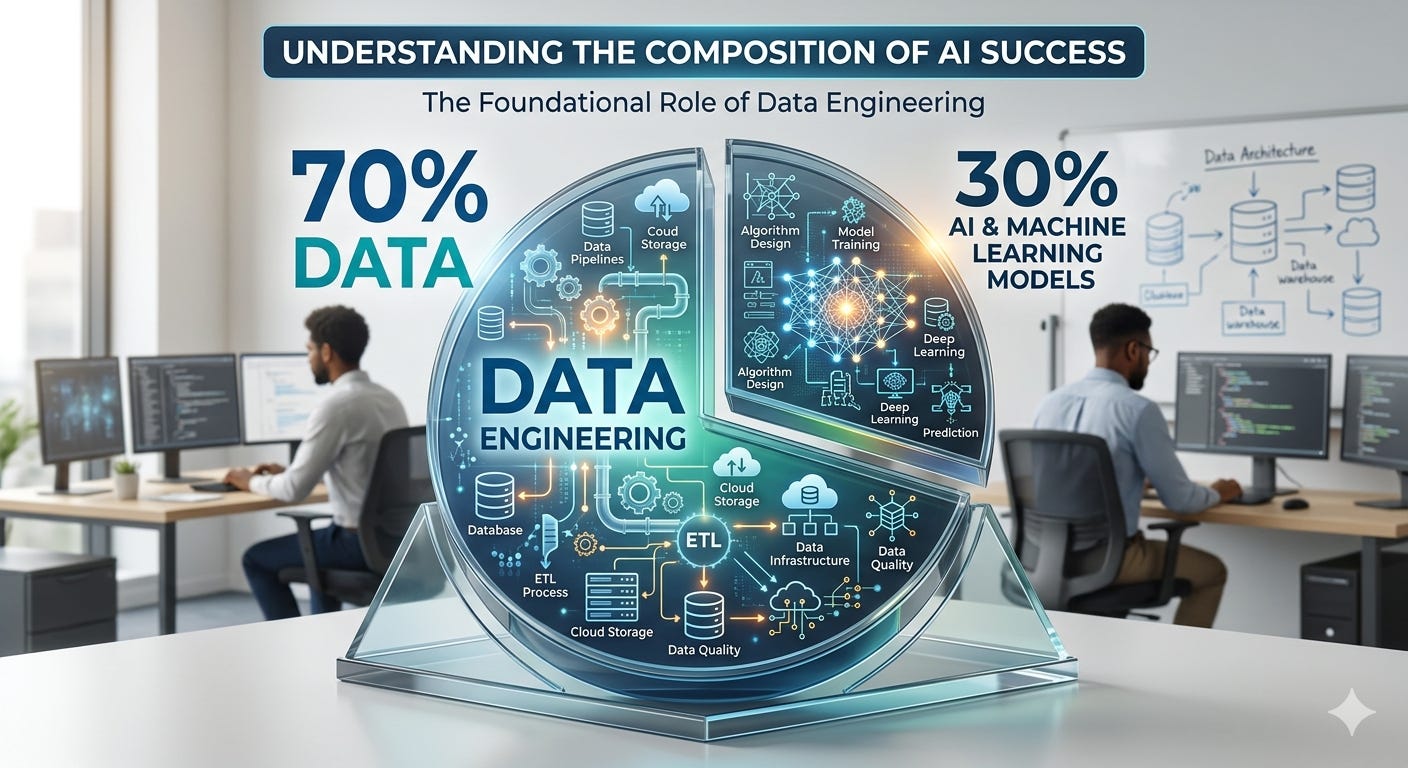

70% of AI is data engineering

We didn’t miss the launch because the model underfit.

70% of AI is data engineering

We didn’t miss the launch because the model underfit. We missed it because a source system renamed a column, a timestamp drifted by eight hours, and a handful of mislabeled records taught the model the wrong lesson. If you’ve shipped AI in the wild, this story feels familiar.

The hard truth: models are the concert; data engineering is the soundcheck, the stage, the power, and the roadies. Without it, nothing plays.

Why the 70% feels right

Most business problems aren’t limited by model capacity; they’re limited by data quality, coverage, and access.

Iteration speed lives in your pipelines. Clean, well-modeled data means faster experiments and fewer mysterious regressions.

Real ROI comes from repeatability. You need lineage, monitoring, and governance to scale beyond one cool demo.

What “data engineering” means in AI

Ingestion and contracts: Know what data you expect, how often, and in what shape. Enforce schemas. Fail loud.

Transformation: Normalize, dedupe, handle missingness, build features, and document assumptions. Make semantics explicit.

Labeling and supervision: Define gold standards, sampling strategies, and inter-annotator agreement. Labels are code; version them.

Evaluation data: Curate stable, representative test sets. Build canaries for edge cases. Freeze them. Guard them.

Storage and access: Choose the right store (warehouse, lakehouse, vector DB). Optimize for retrieval patterns, not hype.

Observability: Monitor freshness, drift, skew, and leakage. Alert on data, not just model metrics.

Governance: Lineage, PII handling, consent, retention, and reproducibility. If a regulator asks “how,” you should have an answer.

In the LLM era, it’s even more true

Retrieval beats retraining. Your RAG quality hinges on chunking, metadata, embeddings, and freshness SLAs.

Prompt quality depends on structured context. If your documents are messy, your outputs will be messy.

Evals are datasets, not vibes. Build automatic checks for grounding, hallucination, and safety using held-out prompts.

Logging is a data pipeline. Store prompts, contexts, tool calls, and outcomes with trace IDs. Close the loop back to training.

A simple operating checklist

Define data contracts with source teams. Treat breaking changes as production incidents.

Build a semantic layer. Human-readable definitions for “customer,” “active,” “churn,” etc.

Version everything: datasets, labels, features, prompts, and evals. Tag releases like software.

Automate quality gates: schema checks, null thresholds, distribution drift, and duplicate detection.

Separate truth from convenience. Maintain clean source tables; materialize model-ready features downstream.

Invest in feedback capture. Tie human corrections and user behavior back into labeled datasets.

Make evaluation a first-class pipeline. Nightly runs on stable suites; dashboards that block deploys on regressions.

Metrics that matter more than your leaderboard

Freshness: How stale is the data your model sees?

Coverage: Do you have enough examples across critical segments and edge cases?

Label health: Agreement rates, ambiguity hotspots, and label drift over time.

Data-to-deploy latency: Time from new data arriving to model reflecting it.

Issue MTTR: How quickly can you detect and fix a broken pipeline?

Here’s the shift: treat data like product, not exhaust. When you do, your models get simpler, your experiments get faster, and your results get boringly reliable—which is where the real value is.

Yes, the last 30% still matters. Architecture choices, hyperparameters, and clever prompts can move needles. But if you want AI that ships, sticks, and scales, hire for data engineering, design for data, and measure what your model actually eats.